For months during the summer of 2024, Jarmarus Brown, a police officer in Orange City, Florida, ran his ex-girlfriend’s license plate through Flock’s automated license plate reader (ALPR) lookup database at least 69 times. He searched for the license plate belonging to her mom at least 24 times, and for the plate belonging to her dad at least 15 times. Brown’s searches were so frequent and so routine that one of his colleagues noticed him researching his ex-girlfriend’s whereabouts while the two officers sat in their police cruisers, according to court records obtained by 404 Media.

“While they were sitting there, Officer [Shadrich] King noticed Jarmarus was on the Flock system and a license plate reader image of [Brown’s ex-girlfriend] was on the screen,” reads a police affidavit about Brown’s behavior obtained by 404 Media. “Officer King said he mentioned to Jarmarus that he needed to stop running her vehicle in that system because he could get in trouble. Jarmarus responded saying that he knew that, and he was going to stop.”

Flock’s automated license plate readers document every car that drives past them, creating a nationwide record of people’s movements. Police can then look up a license plate to learn where a specific car, and by extension a specific person, has traveled over time.

On another occasion, Brown told King that he believed his ex was lying about her whereabouts. She “told Jarmarus she was at her house with her mother, but Jarmarus knew for a fact she was not. When questioned by Officer King as to how he knew for a fact she was lying, Jarmarus said he used the Flock system and saw that her vehicle was elsewhere,” the affidavit reads. “Jarmarus then asked Officer King if he wanted to join him on a ‘stakeout’ to try to see where her vehicle was located.”

According to Brown’s ex-girlfriend, while they were dating he would “constantly require [her] to either be on FaceTime with him or be on the phone with him, even while she was working […] Jarmarus would try to control aspects of [her] life, such as the amount of makeup she would wear and the length of her fingernails.” According to the affidavit, Brown’s stalking extended beyond license plate lookups; at one point while they were dating, he put an Apple AirTag in her wallet. But the bulk of his surveillance came through Flock, the affidavit says, noting that he kept “randomly showing up at the places she was at.”

The affidavit states that Brown told investigators “he would occasionally run her tag through Flock to track her whereabouts” because he believed she was lying to him. “It was dumb as hell on my end, emotions flowing, mind going,” he told investigators. Investigators concluded that Brown “knowingly and intentionally accessed the password protected computer systems, Flock and DAVID [a Florida DMV vehicle information database], to run the license plates of vehicles [she] frequently drove, for his own personal reasons. There was no work related, justifiable, reasons to do so, other than to track [her] whereabouts.” Brown was ultimately charged with stalking and hacking-related offenses; he served one day in prison and was sentenced to five years of probation.

Brown’s case was not a one-off. Local news reports from around the country repeatedly describe police abusing the Flock surveillance system in order to stalk their partners or ex-partners. The contours of each story are much the same: the officer in question uses their access to the system to repeatedly track a specific person over the course of weeks or months. The cases highlight the fact that Flock can be used to track the whereabouts of individual people, that police do not need a warrant to use the system, and that anyone with access to the system has the technical ability to look up any license plate they want, for any reason they want. An April study by the civil rights group Institute for Justice found that at least 18 police officers around the country have been caught using Flock to stalk a romantic interest in the last few years; another database, called the ALPR Abuse Library, has documented 20 specific cases of “stalking/targeting” around the country.

The known cases of police stalking almost certainly represent a vast undercount of the overall abuse, because they largely include only cases in which the behavior was so egregious that it led to police officers being fired, arrested, or both.

Flock told 404 Media that it is “aware of 15 incidents of abuse, each surfaced because of the transparency and accountability features deliberately built into our platform.”

“There are also 140,000 monthly active users of Flock, so the relatively rare instances of abuse, while obviously wrong and awful, are exactly that—rare,” a Flock spokesperson told 404 Media. “Humans are fallible; unlike most tools society provide law enforcement, Flock ensures that in the instances when our technology is misused, the evidence used to hold responsible parties accountable, is right there in our system. We also encourage all our customers to have a usage policy, regular training, and to implement our Audit Assistance tool, which proactively flags unintended use.”

Flock’s audit tools have certainly proven useful in holding police accountable: journalists, activists, and concerned citizens from around the country have pored over Flock audit logs obtained through public records requests to document abuse. But Flock has also strenuously fought lawsuits and proposed regulations that seek to require police to get a warrant to use the system. And many cases of abuse were detected not by police departments themselves but by private citizens, journalists, and stalking victims who found patterns of abuse in public records files obtained from their local police departments. In most cases of Flock-related stalking reviewed by 404 Media, the abuse occurred over the course of months or years, and the victims were subjected to dozens or hundreds of lookups.

Other abuse cases have been discovered using HaveIBeenFlocked.com, a website that compiles Flock searches released via public records requests into a searchable database. Flock has repeatedly tried to get the website taken down, as we have previously reported.

In Wisconsin, a stalking victim checked her own license plate on HaveIBeenFlocked.com and learned that City of Milwaukee Police Officer Josue Ayala had searched it more than 100 times. After she reported the alleged abuse to the police, the agency ran its own audit and learned that Ayala had also searched the license plate of a second victim 124 times in a two-month span last year, according to court records. Each time, Ayala simply listed “investigation” as the reason for his search. In another alleged abuse case, an Idaho police chief used Flock to stalk his wife, entering “test” as the reason for his searches in the Flock system.

A citizens’ anti-surveillance organizing group called Deflock Joplin found anomalous searches by a police officer in Joplin, Missouri, last year. Using Flock audit logs obtained through a public records request, the group found a single license plate that one specific police officer had searched 395 times in a 10-month span in 2025; they also found that a second plate had been searched 147 times (the police officer’s name was redacted in the records).

“The activity presented here is startling and damning,” Deflock Joplin wrote in a blog about its investigation. “One user's account at JPD has surveilled people for around a year without detection. We see no conceivable way the Joplin Police Department is auditing these logs. This activity was blatant and obvious if anyone had bothered to take a look. We were able to find this data, file records requests, create a website, and share them in our spare time […] This system must be removed or severely curtailed to protect residents and their privacy.”

Soon after Deflock Joplin shared its findings with the city, the police officer in question was fired: “During that investigation, it was found that this single Joplin Police Officer did violate the policy regarding department equipment and systems,” the city wrote in a press release. “Any misuse of the Flock system or any other Joplin Police resource will not be tolerated, and discipline will be administered swiftly and in accordance with policy.”

In Orange City, Florida, Brown’s ex suspected she was being stalked and spoke to a friend within the police department, who told her that Brown “used law enforcement databases to track her whereabouts.” She then made a stalking complaint, which started the investigation, according to the affidavit.

In Coffee County, Georgia, officer Chris Rozar was charged with eight crimes, including computer invasion of privacy, prohibited use of captured license plate data, and stalking, because he allegedly “did knowingly misuse the Coffee County Sheriff’s Office Flock Law Enforcement Camera System and Tag Reader System […] for the purposes of stalking,” and that he “did follow, track, and surveil [the victim] throughout multiple locations in Coffee County, without the consent of said person, for the purpose of harassing and intimidating said person.” This case, too, was not discovered through Flock’s auditing tools: “The investigation began about two years ago after a woman came forward with allegations that Rozar had [been] stalking her,” a press release about Rozar’s arrest reads.

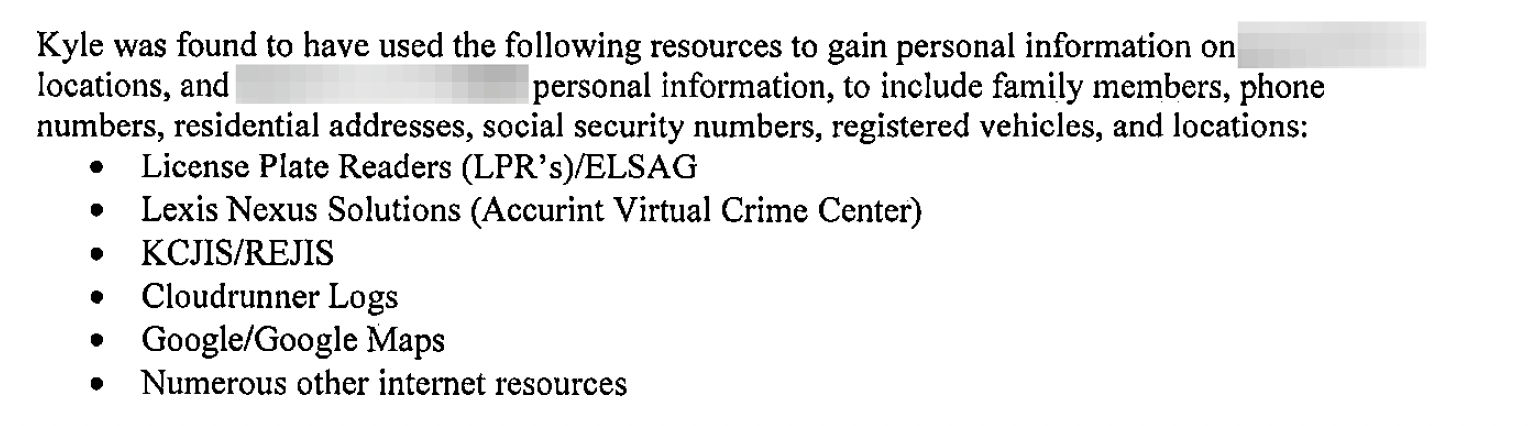

In Bonner Springs, Kansas, a police officer allegedly used Leonardo-brand license plate reader cameras to stalk his ex-wife as part of a horrifying and extensive hacking and spying campaign; the officer was also found to have bestiality and child sexual abuse material on his devices.

There are more than a dozen other cases from around the country where the story is much the same; a police officer stalks their partner or an ex for months before ultimately getting caught and fired or arrested. These cases repeatedly show that, because there are few limits on what police can use Flock for, they are often able to abuse the system for months or years before being caught.

Many of the known cases of police abuse were discovered only after the victim reported being stalked or after journalists or local government transparency groups crunched the data, and many of them went on for months. 404 Media is also aware of several instances in which an officer improperly used Flock and was simply warned or placed on leave, consequences that stopped short of arrest or firing.

404 Media is aware of at least one case that has not yet been reported in the media. In Dunwoody, Georgia, several police officers were fired or made to resign for improperly searching for people in the Georgia Crime Information Center, a state database. At least one of the fired officers also improperly searched the city's Flock cameras, according to an internal investigative report shared with 404 Media by Jason Hunyar, a Dunwoody resident who has been investigating Flock. Dunwoody has a very close relationship with Flock, and the company used Dunwoody as a demonstration for other police departments during sales pitches until Hunyar discovered that the company was accessing cameras in a children's gymnasium during these pitches.

“The fundamental problem with these systems is that they place private information about people’s movements over time in the hands of every officer,” Michael Soyfer, an Institute for Justice attorney, said in the organization’s report. “Without the constitutional safeguard of a warrant requirement, that predictably allows officers to abuse their access to these systems for things like stalking romantic partners.”
